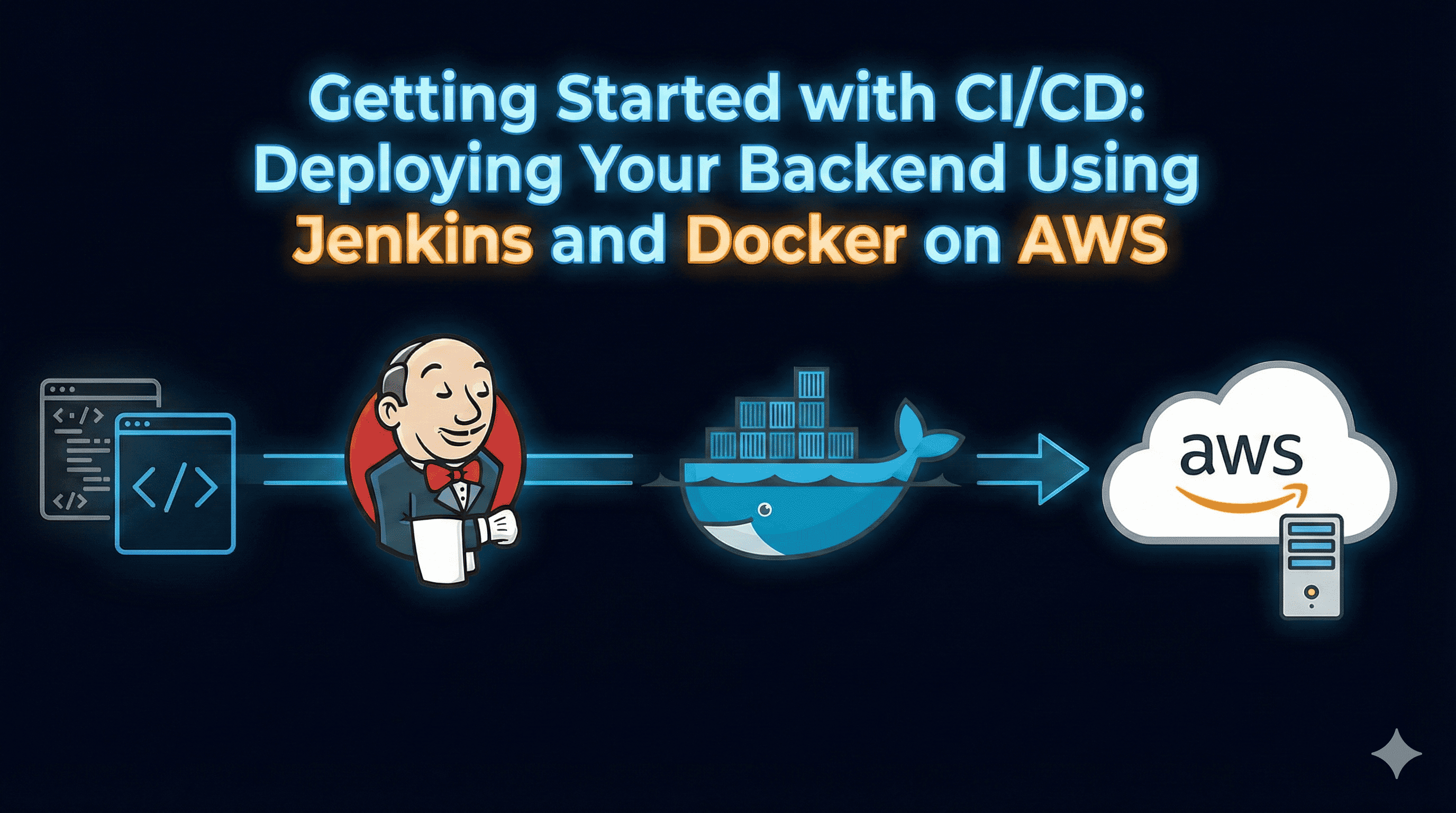

Getting Started with CI/CD

Introduction

In modern software development, shipping code quickly and reliably is just as important as writing the code itself. This is where CI/CD comes into play. Continuous Integration (CI) ensures that every code change is tested and validated automatically, while Continuous Deployment (CD) makes sure that successful changes are delivered to production without manual effort. Together, they allow teams to release features faster, reduce human errors, and maintain a predictable development workflow.

When it comes to implementing CI/CD for backend applications, Jenkins, Docker, and AWS form a powerful, production-ready combination.

Jenkins provides a highly flexible automation engine that can run pipelines, integrate with GitHub, and orchestrate deployments.

Docker ensures your application runs in a consistent environment across development, testing, and production.

AWS offers scalable and reliable infrastructure with services like EC2 for hosting and ECR for storing your container images.

In this guide, you will learn how to build a complete CI/CD pipeline where every code update triggers an automated workflow:

Code Push → Jenkins Pipeline → Docker Image Build → Push to Amazon ECR → Deploy on Amazon EC2

By the end of this blog, you will have a fully automated deployment setup for your backend application using Jenkins Pipeline, Docker, Amazon ECR, and EC2.

Prerequisites

Before we begin, make sure you have the following tools and configurations ready. These will ensure a smooth setup of the CI/CD pipeline using Jenkins, Docker, Amazon ECR, and EC2.

1. AWS Account

You need an active AWS account to create:

EC2 instance (to host Jenkins and deploy backend)

ECR repository (to store Docker images)

IAM user/role with proper permissions

2. EC2 Instance (Ubuntu Recommended)

Launch an EC2 instance with:

Ubuntu 20.04 or 22.04

t2.micro (Free-tier) or higher

Open the following ports in the Security Group:

22 → SSH

8080 → Jenkins

5000 → Backend application port (the example app in this guide listens on 5000; open whichever port your app exposes)

80 / 443 (optional if using Nginx or HTTPS)

3. SSH Key Pair

A key pair (.pem file) is required to:

SSH into your EC2 instance

Allow Jenkins pipeline to log into EC2 for deployment

4. GitHub Repository

You need a backend project hosted on GitHub containing:

Source code

Dockerfile at the root folder

Jenkinsfile (or plan to add it during this guide)

Supported backend languages include Node.js, Java, Python, Go, etc.

5. Docker Installed on EC2

Docker must be installed on your EC2 instance where the backend will run.

Jenkins will also use Docker to:

Build the image

Tag and push it to ECR

Redeploy it on EC2

6. Jenkins Installed on EC2

A running Jenkins server with:

Admin access

Required plugins (Git, Docker Pipeline, Amazon ECR, SSH Agent)

7. AWS CLI Installed

AWS CLI is needed on the Jenkins server to authenticate with Amazon ECR.

8. Basic Knowledge Requirements

To follow this guide smoothly, you should have:

Basic Linux/terminal knowledge

Understanding of Git workflow

Familiarity with Docker basics (build, run, push)

Minimal understanding of AWS EC2 and ECR

Step 1: Set Up Your EC2 Instance

To run Jenkins and deploy your backend application, we’ll use an Amazon EC2 instance. This instance will act as both your CI/CD server (Jenkins) and your deployment target.

1.1 Launch an EC2 Instance

Follow these steps in the AWS Console:

Go to EC2 → Instances → Launch Instance

Choose an AMI:

- Ubuntu Server LTS (recommended for stability and Docker support)

Select Instance Type:

t2.micro (free-tier eligible)

You can choose t2.small or higher if your Jenkins workload is heavier.

Create or select an SSH Key Pair (.pem file)

Configure Security Group with the following inbound rules:

| Port | Purpose |
| --- | --- |
| 22 | SSH Access |
| 8080 | Jenkins dashboard |
| 5000 | Backend application (the example in this guide listens on 5000; open whichever port your app exposes) |
| 80 / 443 | Optional: If using Nginx or HTTPS |

Launch the instance.
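The inbound rules can also be added with the AWS CLI instead of the console. The sketch below only prints one aws ec2 authorize-security-group-ingress command per port so you can review them before running anything. The helper name print_ingress_commands, the security group ID sg-0123456789abcdef0, and the CIDR 203.0.113.10/32 are placeholders invented for this example, and 5000 is assumed to be the backend port used later in this guide.

```shell
#!/bin/sh
# Print (do not run) one AWS CLI command per TCP port to open in a security group.
# Usage: print_ingress_commands <group-id> <cidr> <port>...
print_ingress_commands() (
  group_id=$1
  cidr=$2
  shift 2
  for port in "$@"; do
    printf 'aws ec2 authorize-security-group-ingress --group-id %s --protocol tcp --port %s --cidr %s\n' \
      "$group_id" "$port" "$cidr"
  done
)

# Placeholder values: replace with your own security group ID and IP range.
print_ingress_commands sg-0123456789abcdef0 203.0.113.10/32 22 8080 5000
```

Limiting ports 22 and 8080 to your own address (a /32 CIDR) rather than 0.0.0.0/0 keeps SSH and the Jenkins dashboard off the open internet.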

1.2 Connect to EC2 via SSH

Use your terminal or PowerShell:

ssh -i your-key.pem ubuntu@<EC2-PUBLIC-IP>

Replace <EC2-PUBLIC-IP> with the public IPv4 address of your instance.

1.3 Update the Server

Once you're logged in, update system packages:

sudo apt update && sudo apt upgrade -y

1.4 Install Essential Tools

Install basic tools needed later:

sudo apt install -y git curl unzip

1.5 Understanding What This EC2 Will Do

This single EC2 instance will be responsible for:

Running Jenkins (CI/CD server)

Using Docker to build and push images to ECR

Deploying and running your backend application in a Docker container

If you want to separate Jenkins from the deployment server, you can use two EC2 machines, but for learning and medium-sized projects, one is enough.

Step 2: Install Docker on EC2

Docker is essential because both Jenkins and your EC2 server will use it to build, manage, and run your backend application inside containers.

Follow these steps to install Docker and prepare your EC2 instance for container-based deployments.

2.1 Install Docker Engine

Run the following commands to install Docker:

sudo apt update

sudo apt install -y ca-certificates curl gnupg lsb-release

Add Docker’s official GPG key:

sudo mkdir -m 0755 -p /etc/apt/keyrings

curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /etc/apt/keyrings/docker.gpg

Add Docker repository:

echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu \
  $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

Install Docker Engine:

sudo apt update

sudo apt install -y docker-ce docker-ce-cli containerd.io

2.2 Verify Docker Installation

Run:

docker --version

And test Docker:

sudo docker run hello-world

If you see the "Hello from Docker!" message, installation is successful.

2.3 Allow Non-Root Users to Use Docker

Add the ubuntu user to the Docker group:

sudo usermod -aG docker ubuntu

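If you install Jenkins as a host package instead of a container, also add the jenkins user after installation. Skip this when Jenkins runs in Docker, as in Step 3, because the host then has no jenkins user and the command fails:

sudo usermod -aG docker jenkins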

Apply the changes:

newgrp docker

2.4 Enable Docker to Start on Boot

sudo systemctl enable docker

sudo systemctl start docker

2.5 Confirm Docker Permissions

Verify that Docker can run without sudo:

docker ps

Now Docker is fully set up on your EC2 server and ready to be used by Jenkins for image building and deployment.
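Before moving on, it can help to confirm that the tools installed so far are actually on the PATH. The following is a minimal sketch; missing_tools is a hypothetical helper name, and the tool list simply mirrors what Steps 1 and 2 install.

```shell
#!/bin/sh
# Print the name of every command from the argument list that is not on PATH.
missing_tools() (
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
)

missing=$(missing_tools git curl unzip docker)
if [ -n "$missing" ]; then
  # Left unquoted on purpose so the names are joined onto one line.
  echo "Missing tools:" $missing
else
  echo "All required tools are installed."
fi
```

The same check can be repeated later with aws added to the list once the AWS CLI is installed.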

Step 3: Install Jenkins on EC2 Using Docker

Instead of installing Jenkins manually with packages, you’ll run Jenkins as a Docker container. This method is cleaner, easier to update, and keeps your EC2 instance lightweight.

We will use the official Jenkins LTS Docker image.

3.1 Create a Directory for Jenkins Data

To ensure Jenkins data (jobs, plugins, configs) is not lost when the container restarts, create a persistent volume:

mkdir -p ~/jenkins_home

sudo chown -R 1000:1000 ~/jenkins_home

Jenkins inside Docker runs as user 1000, so we give it permission.

3.2 Run Jenkins Docker Container

Run the Jenkins container mapped to port 8080:

docker run -d \
  --name jenkins \
  -p 8080:8080 \
  -p 50000:50000 \
  -v ~/jenkins_home:/var/jenkins_home \
  jenkins/jenkins:lts

What each parameter means:

-p 8080:8080 → Jenkins UI

-p 50000:50000 → For Jenkins agent communication

-v ~/jenkins_home:/var/jenkins_home → Persistent Jenkins storage

jenkins/jenkins:lts → Stable Jenkins version

3.3 Install Docker Inside Jenkins Container (Important)

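Since Jenkins will build Docker images, the container needs access to the host's Docker daemon and a Docker CLI to talk to it. The official jenkins/jenkins:lts image does not include the Docker CLI, so mounting the socket alone is not enough.

Stop and remove the existing Jenkins container (your data stays in ~/jenkins_home):

docker stop jenkins && docker rm jenkins

Re-run Jenkins with the Docker socket mounted and the socket's group added to the container user:

docker run -d \
  --name jenkins \
  -p 8080:8080 \
  -p 50000:50000 \
  -v ~/jenkins_home:/var/jenkins_home \
  -v /var/run/docker.sock:/var/run/docker.sock \
  --group-add "$(stat -c '%g' /var/run/docker.sock)" \
  jenkins/jenkins:lts

Install the Docker CLI and AWS CLI inside the container. The package names below assume the Debian-based official image; packages installed this way are lost when the container is recreated, so a custom image is the more durable option:

docker exec -u root jenkins sh -c "apt-get update && apt-get install -y docker.io awscli"

Now Jenkins can run docker commands against the host’s Docker engine.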

3.4 Access Jenkins UI

Open in your browser:

http://EC2_PUBLIC_IP:8080

3.5 Retrieve Jenkins Initial Password

Inside the container, run:

docker exec -it jenkins cat /var/jenkins_home/secrets/initialAdminPassword

Copy the password and paste it into the Jenkins setup screen.

3.6 Install Recommended Plugins

After login:

Select Install suggested plugins

Wait for Jenkins to install everything

Create your admin user

Finish setup

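3.7 Check Docker Socket Permissions

The jenkins user lives inside the container, so running sudo usermod -aG docker jenkins on the host has no effect (the host has no jenkins user). What matters is that the container user can read and write /var/run/docker.sock. Check the socket's group ID on the host:

stat -c '%g' /var/run/docker.sock

Pass that ID to docker run with --group-add when you start the Jenkins container. If you only changed Jenkins configuration, restart the container:

docker restart jenkins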

3.8 Verify Docker From Jenkins

Inside the Jenkins container:

docker exec -it jenkins docker --version

If it prints a version, Jenkins can run Docker.

Jenkins is now fully configured inside Docker and ready to run CI/CD pipelines.

Step 4: Install Required Plugins in Jenkins

To build a complete CI/CD pipeline that deploys your backend using Docker and Amazon ECR, Jenkins requires a few essential plugins. These plugins provide Git integration, Docker commands, ECR authentication, and SSH deployment capabilities.

4.1 Access Plugin Manager

Go to Jenkins Dashboard → Manage Jenkins → Manage Plugins (labelled Plugins in recent Jenkins releases)

Switch to the Available tab

Search and install the following plugins (you can select multiple):

4.2 Essential Plugins

| Plugin | Purpose |
| --- | --- |
| Git Plugin | Pull code from GitHub or other Git repositories |
| Pipeline | Enable pipeline jobs using Jenkinsfile |
| Docker Pipeline | Build and push Docker images inside Jenkins pipeline |
| Amazon ECR | Authenticate and push Docker images to Amazon ECR |
| SSH Agent | Allows Jenkins to SSH into EC2 for deployments |
| Credentials Binding Plugin | Safely store and use credentials in pipelines |

4.3 Restart Jenkins (Optional)

Some plugins may require a restart. Jenkins will prompt you if necessary. You can also restart using Docker:

docker restart jenkins

4.4 Configure Credentials in Jenkins

You need to securely store the following credentials:

AWS Access Key & Secret Key

Go to Manage Jenkins → Credentials → System → Global credentials → Add Credentials

Kind: AWS Credentials (the AmazonWebServicesCredentialsBinding used in the Jenkinsfile expects this kind, not Username with password)

ID: aws-ecr-credentials (used in Jenkinsfile)

EC2 SSH Key

Add your .pem key as SSH Username with private key

ID: ec2-key (used for deployment stage)

Once these plugins and credentials are set, Jenkins is fully ready to run pipeline jobs that build Docker images, push them to Amazon ECR, and deploy them on EC2.

Step 5: Create Amazon ECR Repository

Amazon Elastic Container Registry (ECR) is a fully managed Docker container registry that allows you to store, manage, and deploy Docker images. In this step, we’ll create a repository for your backend Docker images.

5.1 Create a New ECR Repository

Log in to the AWS Management Console.

Go to Services → ECR → Repositories → Create Repository.

Configure the repository:

Name: backend-service (or any name you prefer)

Visibility: Private (recommended for production)

Tags: Optional

Click Create Repository.

5.2 Note the Repository URI

After creation, you will see the Repository URI. It looks like:

<aws_account_id>.dkr.ecr.<region>.amazonaws.com/backend-service

You’ll need this URI in your Jenkins pipeline to tag and push Docker images.
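The URI has two parts that are used differently later: the registry host (everything before the first slash) is what docker login targets, while the full URI is what images are tagged with. A small sketch of how the two relate is shown below; the function names ecr_repo_uri and ecr_registry_host are made up for this example and 123456789012 is a placeholder account ID.

```shell
#!/bin/sh
# Build the repository URI from its parts:
#   <aws_account_id>.dkr.ecr.<region>.amazonaws.com/<repository-name>
ecr_repo_uri() {
  printf '%s.dkr.ecr.%s.amazonaws.com/%s\n' "$1" "$2" "$3"
}

# The registry host is everything before the first slash.
ecr_registry_host() {
  printf '%s\n' "${1%%/*}"
}

# 123456789012 is a placeholder account ID.
uri=$(ecr_repo_uri 123456789012 ap-south-1 backend-service)
echo "Repository URI: $uri"
echo "Registry host:  $(ecr_registry_host "$uri")"
```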

5.3 Configure AWS IAM User or Role

Jenkins needs AWS credentials to authenticate and push Docker images to ECR.

Go to IAM → Users → Add User

Access type: Programmatic access (for AWS CLI)

Attach policies:

AmazonEC2ContainerRegistryFullAccess

AmazonEC2FullAccess (optional; only needed if Jenkins calls the EC2 API, which the SSH-based deployment in this guide does not)

Copy Access Key ID and Secret Access Key

5.4 Add AWS Credentials to Jenkins

Go to Jenkins Dashboard → Manage Jenkins → Credentials → System → Global credentials → Add Credentials

Select Kind: AWS Credentials (the kind expected by the AmazonWebServicesCredentialsBinding used in the Jenkinsfile)

Enter Access Key and Secret Key

Set ID as aws-ecr-credentials (you’ll use this in the Jenkinsfile)

Once the ECR repository and credentials are ready, Jenkins can push Docker images from your CI/CD pipeline to Amazon ECR.

5.5 Add GitHub Credentials in Jenkins

Go to Jenkins Dashboard → Manage Jenkins → Credentials → System → Global credentials → Add Credentials

Choose Kind: Username with password

Enter:

Username: Your GitHub username

Password: A GitHub Personal Access Token (PAT); GitHub no longer accepts account passwords for Git operations over HTTPS

Set ID: github-credentials

If your repository is private, Jenkins will need these credentials to clone the repo.

5.6 EC2 SSH Key (ec2-key)

When you launch your EC2 instance, you create a .pem key pair. This key allows secure SSH access to the instance.

To let Jenkins deploy the backend automatically, you need to add this key in Jenkins:

Steps:

Go to Jenkins Dashboard → Manage Jenkins → Credentials → System → Global credentials → Add Credentials

Choose Kind: SSH Username with private key

Fill in the details:

Username: ubuntu (default for Ubuntu EC2)

Private Key: Enter directly or upload your .pem file

ID: ec2-key (used in Jenkinsfile)

Click OK to save.

Step 6: Write Your Dockerfile & Docker Compose for Backend

Note: You can clone this repository for reference and follow along with the examples in this blog. It contains the full backend project, Dockerfile, and Docker Compose setup.

In this step, we will containerize a Node.js backend that uses MongoDB. We’ll use a Dockerfile for the backend service and Docker Compose to orchestrate both backend and database containers together.

6.1 Dockerfile for the Backend

FROM node:18-alpine

WORKDIR /usr/src/app

COPY package*.json ./

RUN npm install

COPY . .

EXPOSE 5000

CMD ["npm", "start"]

Explanation:

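Base Image: node:18-alpine provides a lightweight Node.js environment.

WORKDIR: Sets the working directory inside the container.

COPY + RUN: Copies the package manifests first and installs dependencies using npm install, so the dependency layer is cached between builds.

EXPOSE 5000: Documents that the backend listens on port 5000; the port is actually published with -p or the Compose ports setting.

CMD: Starts the backend using npm start.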

6.2 Docker Compose for Multi-Container Setup

version: '3.8'

services:
  app:
    build: .
    ports:
      - "5000:5000"
    environment:
      - MONGODB_URI=mongodb://mongo:27017/express-mongo-app
      - PORT=5000
    depends_on:
      - mongo
    restart: unless-stopped
    networks:
      - app-network

  mongo:
    image: mongo:6.0
    ports:
      - "27017:27017"
    volumes:
      - mongodb_data:/data/db
    networks:
      - app-network

networks:
  app-network:
    driver: bridge

volumes:
  mongodb_data:

Explanation:

app service:

Builds the backend from the Dockerfile.

Connects to MongoDB using MONGODB_URI.

Automatically restarts unless manually stopped.

mongo service:

Uses official MongoDB image.

Persists data using a Docker volume (mongodb_data).

Networks: Both services are connected to app-network so the backend can reach MongoDB by hostname mongo.

Volumes: Ensures MongoDB data is not lost when containers restart.

6.3 Run the Multi-Container Setup

Run the following command locally to test (with the Compose V2 plugin, the equivalent is docker compose up --build):

docker-compose up --build

Backend will be available at http://localhost:5000

MongoDB will be accessible internally at mongo:27017

This setup ensures your backend and database run together in isolated containers. Later, the Jenkins pipeline will automate building and deploying this setup to EC2.

Step 7: Create a Jenkins Pipeline Job and Jenkinsfile

Now that your Dockerfile and Docker Compose setup are ready, we will create a Jenkins Pipeline that automates the process of building, pushing, and deploying your backend application.

7.1 Create a New Pipeline Job in Jenkins

Open your Jenkins dashboard: http://<EC2_PUBLIC_IP>:8080

Click New Item → Enter Job Name (e.g., Backend-CI-CD) → Select Pipeline → Click OK

Scroll down to Pipeline section → Choose Pipeline script from SCM

SCM: Git

Repository URL: https://github.com/your-username/your-repo.git

Branch: main

Script Path: Jenkinsfile

Jenkins will now fetch the Jenkinsfile from your repo and execute it for each build.

7.2 Example Jenkinsfile

This Jenkinsfile will:

Checkout code from GitHub

Login to Amazon ECR

Build Docker image

Push image to ECR

Deploy backend on EC2

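pipeline {
    agent any

    environment {
        AWS_REGION   = "ap-south-1"
        // Registry host (used for docker login) and full repository URI (used for tags)
        ECR_REGISTRY = "your_account_id.dkr.ecr.ap-south-1.amazonaws.com"
        ECR_REPO     = "${ECR_REGISTRY}/backend-service"
    }

    stages {
        stage('Checkout Code') {
            steps {
                git branch: 'main',
                    url: 'https://github.com/your-username/your-repo.git',
                    credentialsId: 'github-credentials'
            }
        }

        stage('Login to ECR') {
            steps {
                withCredentials([[$class: 'AmazonWebServicesCredentialsBinding', credentialsId: 'aws-ecr-credentials']]) {
                    sh "aws ecr get-login-password --region $AWS_REGION | docker login --username AWS --password-stdin $ECR_REGISTRY"
                }
            }
        }

        stage('Build Docker Image') {
            steps {
                sh "docker build -t backend-service ."
            }
        }

        stage('Tag Docker Image') {
            steps {
                sh "docker tag backend-service:latest $ECR_REPO:latest"
            }
        }

        stage('Push to ECR') {
            steps {
                sh "docker push $ECR_REPO:latest"
            }
        }

        stage('Deploy to EC2') {
            steps {
                withCredentials([[$class: 'AmazonWebServicesCredentialsBinding', credentialsId: 'aws-ecr-credentials']]) {
                    sshagent(credentials: ['ec2-key']) {
                        sh """
                            # Log the ubuntu user on EC2 in to ECR; registry logins are stored per user, not per daemon
                            aws ecr get-login-password --region $AWS_REGION |
                                ssh -o StrictHostKeyChecking=no ubuntu@<EC2_PUBLIC_IP> 'docker login --username AWS --password-stdin $ECR_REGISTRY'

                            # Parentheses keep a failed pull from being masked by the "|| true" fallbacks
                            ssh -o StrictHostKeyChecking=no ubuntu@<EC2_PUBLIC_IP> '
                                docker pull $ECR_REPO:latest &&
                                (docker stop backend || true) &&
                                (docker rm backend || true) &&
                                docker run -d -p 5000:5000 --name backend $ECR_REPO:latest
                            '
                        """
                    }
                }
            }
        }
    }
}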

7.3 Key Points of This Pipeline

Checkout Code: Pulls the latest code from GitHub.

Login to ECR: Uses AWS CLI to authenticate Docker with Amazon ECR.

Build & Tag Docker Image: Builds backend container and tags it with the ECR repository URI.

Push to ECR: Pushes the Docker image to your private ECR repository.

Deploy to EC2: Connects to the EC2 server over SSH, pulls the new image, stops and removes any running container, and starts a new one.

7.4 Configure Jenkins Credentials

Make sure you have added the following credentials in Jenkins:

AWS Credentials → ID: aws-ecr-credentials

EC2 SSH Key → ID: ec2-key

GitHub Credentials → ID: github-credentials (needed to clone a private repository)

These IDs are referenced in the Jenkinsfile.

7.5 Trigger the Pipeline

Save the pipeline job.

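Click Build Now → Jenkins will start the CI/CD pipeline. To start builds automatically on every push, enable a build trigger in the job configuration (for example Poll SCM, or GitHub hook trigger for GITScm polling together with a webhook on the GitHub repository).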

Check console output for logs of each stage.

Once complete, your backend will be running on EC2 at:

http://<EC2_PUBLIC_IP>:5000

This completes the automation setup for your backend deployment using Jenkins, Docker, Amazon ECR, and EC2.

Step 8: Verify Deployment on EC2

Once the Jenkins pipeline successfully completes all stages (build → push → deploy), the next step is to verify whether your backend is running correctly on your EC2 instance.

8.1 Check Running Containers on EC2

SSH into your EC2 instance manually:

ssh -i your-key.pem ubuntu@<EC2_PUBLIC_IP>

Then check the running containers:

docker ps

You should see the container named backend running with port mapping:

0.0.0.0:5000->5000/tcp

8.2 Test the API Endpoint

Open your browser or run curl:

curl http://<EC2_PUBLIC_IP>:5000

If your backend has a /health or / route, you should get a response like:

{ "status": "ok", "message": "Server is running" }

This confirms the deployment is successful.
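A container can take a few seconds to start listening after docker run returns, so a single curl right after deployment may fail even though the deployment is fine. The sketch below is a generic retry helper (retry is a name chosen for this example, not part of any tool) that could wrap the curl check; the /health path in the commented usage is an assumption about your backend's routes.

```shell
#!/bin/sh
# Run a command up to <attempts> times, sleeping <delay> seconds between tries.
# Succeeds as soon as the command succeeds; fails if every attempt fails.
# Usage: retry <attempts> <delay-seconds> <command> [args...]
retry() (
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      exit 0
    fi
    # No need to sleep after the final failed attempt.
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  exit 1
)

# Example (not executed here): poll the backend 10 times, 5 seconds apart.
# retry 10 5 curl -fsS "http://<EC2_PUBLIC_IP>:5000/health"
```

The -f flag makes curl return a non-zero exit code on HTTP error responses, which is what lets the helper treat a 5xx reply as a failed attempt.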

8.3 Check Application Logs

If something goes wrong, check logs:

docker logs backend

Look for:

Database connection errors

Missing environment variables

Port binding issues

Crash loops

8.4 Check MongoDB Container (If Running on EC2)

If your EC2 also hosts Mongo:

docker ps | grep mongo

If it's running through Docker Compose on EC2, make sure both containers are attached to the same network.

8.5 Debug Common Network Issues

If curl does not return a response:

Check EC2 Security Group and ensure port 5000 is open

Check Docker container port exposure

Check if the container is restarting:

docker ps -a

Inspect container logs:

docker logs backend

Step 9: Common Issues and How to Fix Them

Even with a well-configured CI/CD pipeline, you may face issues during deployment. This section covers the most common problems and how to resolve them efficiently.

9.1 Jenkins Cannot Clone GitHub Repository

Error: Authentication failed or Repository not found

Causes:

GitHub repository is private

Missing or wrong GitHub credentials

Incorrect credentialsId in Jenkinsfile

Fix:

Add GitHub credentials in Jenkins (Personal Access Token recommended).

Set the credential ID in the Jenkinsfile:

credentialsId: 'github-credentials'

9.2 Jenkins Fails to Login to ECR

Error: Cannot connect to the Docker daemon

or unauthorized: authentication required

Causes:

Jenkins is missing AWS credentials

ECR repository does not exist

The Docker CLI is missing from the Jenkins container, or the host's Docker socket is not mounted or not accessible

Fix:

Create ECR repo manually:

aws ecr create-repository --repository-name backend-service

Add AWS credentials in Jenkins with ID: aws-ecr-credentials

Ensure the Docker CLI is available inside the Jenkins container and that the host's /var/run/docker.sock is mounted into it.

9.3 Docker Build Fails in Jenkins

Common reasons:

Wrong Dockerfile path

Missing environment variables

Broken application code

Missing package.json or node_modules issues

Fix:

Verify Dockerfile is inside project root.

Run this locally to confirm Dockerfile builds:

docker build -t test-backend .

9.4 Jenkins Cannot SSH Into EC2

Error: Permission denied (publickey)

or Host key verification failed

Causes:

Wrong username (use ubuntu for Ubuntu AMIs)

Incorrect SSH key added in Jenkins

Missing SSH agent plugin

Public IP changed for EC2

Fix:

Add EC2 SSH key (ec2-key) as type: SSH Username with Private Key

Username should be correct:

- Ubuntu AMI: ubuntu

Disable strict host checking in command:

ssh -o StrictHostKeyChecking=no ubuntu@<EC2_PUBLIC_IP>

9.5 EC2 Deployment Issues After Pulling Image

Error when running container: Address already in use

or backend stops immediately.

Causes:

A container is already running on port 5000

Environment variables missing

Crash due to MongoDB URI issues

Fix:

docker stop backend || true

docker rm backend || true

docker run -d -p 5000:5000 --name backend <image-url>

Check logs:

docker logs backend
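The stop, remove, and run sequence above can be wrapped in a small helper that is safe to run repeatedly. This is only a sketch: redeploy and the DOCKER override variable are names invented for this example, and the defaults (container name backend, port 5000) are taken from this guide. Setting DOCKER=echo turns it into a dry run that prints the commands instead of executing them.

```shell
#!/bin/sh
# Replace the running container with a fresh one started from the given image.
# Usage: redeploy <image> [container-name] [port]
# Set DOCKER=echo to preview the commands without running them.
redeploy() (
  image=$1
  name=${2:-backend}
  port=${3:-5000}
  docker_cmd=${DOCKER:-docker}

  # Abort before touching the running container if the pull fails.
  $docker_cmd pull "$image" || exit 1

  # A missing container is not an error (for example on the first deployment).
  $docker_cmd stop "$name" >/dev/null 2>&1 || true
  $docker_cmd rm "$name" >/dev/null 2>&1 || true

  $docker_cmd run -d -p "$port:$port" --name "$name" "$image"
)

# Dry run: prints the pull and run commands instead of executing them.
DOCKER=echo
redeploy "<image-url>"
```

Pulling before stopping means a failed pull leaves the old container running instead of taking the service down.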

9.6 Application Cannot Connect to MongoDB

Common causes:

Wrong MongoDB hostname

MongoDB not running

MongoDB is inside Docker but backend is not using the correct network

If deployed on EC2 with one container only, MongoDB URI is wrong

Fix:

If using Docker Compose locally, use:

mongodb://mongo:27017/express-mongo-app

If using external MongoDB (Atlas or EC2), use the correct connection string.

Confirm Mongo is running:

docker ps | grep mongo

9.7 Pipeline Works But EC2 Shows Old Code

Cause: Docker container on EC2 is not updated correctly.

Fix:

Ensure pipeline uses these steps:

docker pull <repo>:latest

docker stop backend || true

docker rm backend || true

docker run -d -p 5000:5000 --name backend <repo>:latest
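Because latest is a moving tag, it can be hard to tell which build a running container came from. One common alternative is to push a unique tag per build as well. The sketch below derives such a tag; image_tag is a hypothetical helper, and it assumes BUILD_NUMBER and GIT_COMMIT are present in the Jenkins build environment (whether GIT_COMMIT is set depends on how the checkout is done).

```shell
#!/bin/sh
# Compose an image tag such as "42-a1b2c3d" from a build number and a commit hash.
# Falls back to whichever value is present, or to "latest" when both are empty.
image_tag() (
  build=$1
  commit=$2
  short=$(printf '%s' "$commit" | cut -c1-7)
  if [ -n "$build" ] && [ -n "$short" ]; then
    printf '%s-%s\n' "$build" "$short"
  elif [ -n "$build" ]; then
    printf '%s\n' "$build"
  elif [ -n "$short" ]; then
    printf '%s\n' "$short"
  else
    printf 'latest\n'
  fi
)

# BUILD_NUMBER and GIT_COMMIT are assumed to come from the Jenkins build environment.
echo "Tag for this build: $(image_tag "${BUILD_NUMBER:-}" "${GIT_COMMIT:-}")"
```

With a unique tag, docker ps on EC2 shows exactly which build is running, and rolling back is a matter of running an earlier tag.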

Conclusion

In this guide, you learned how to build a complete CI/CD pipeline for deploying a backend application using Jenkins, Docker, AWS ECR, and an EC2 instance. By the end of the setup, your workflow is fully automated:

Push code to GitHub

Jenkins pulls the latest code

Jenkins builds a Docker image

The image is pushed to Amazon ECR

The latest container is deployed automatically on EC2

This approach ensures faster deployments, consistent environments, and minimal manual work. You can extend this pipeline further by adding:

Automated tests

Blue-green or rolling deployments

Monitoring and alerting

HTTPS with Nginx or AWS Load Balancer

Multi-environment pipelines (dev, stage, prod)

By adopting Jenkins with Docker and AWS, you make your backend deployment process more reliable, scalable, and ready for real-world production use.